How to Monitor How AI Engines Describe Your Company in Real Time

Track how ChatGPT, Perplexity, and other AI engines describe your company, detect inaccuracies, and measure improvement with a repeatable workflow.

The Silent Revenue Loss of the Visibility Gap

As of April 2026, generative engine optimization (GEO) has rapidly evolved from an experimental marketing tactic to a fundamental pillar of corporate reputation management. SE Ranking reports that approximately 60% of all organic search traffic is now derived directly from AI-generated responses. When consumers ask an AI engine for software recommendations, the resulting answer often serves as the definitive final touchpoint before a purchasing decision.

However, unlike traditional search engine results pages (SERPs), which offer relatively stable rankings, generative AI outputs are highly volatile. Research shows that only 30% of brands maintain visibility between consecutive AI-generated answers to the exact same prompt.

The visibility gap refers to the untracked loss of brand awareness that occurs when AI engines omit your company without generating analytics data. Because an omitted brand receives no click, impression, or referral, the lost pipeline opportunity never appears in traditional analytics suites: the loss is silent. Bridging this gap requires evaluating modern Brand Monitoring Tools for AI Search Engines that specifically crawl conversational outputs.

The Volatility of AI Search

- 60% of organic traffic now comes from AI responses.

- Only 30% of brands maintain visibility between consecutive answers to the same prompt.

- Visibility drops to 20% over five consecutive prompt runs.
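To make these persistence figures concrete, the sketch below shows one way such numbers could be computed from your own prompt runs. It assumes each run has already been reduced to the set of brands mentioned in the answer; the brand names and function names are hypothetical, and the definitions used here (pairwise retention and survival across all runs) are one reasonable reading of the statistics above, not the methodology behind them.

```python
def retention_between_runs(runs: list[set[str]]) -> list[float]:
    """For each consecutive pair of runs, return the share of brands in the
    earlier run that reappear in the later run."""
    rates = []
    for earlier, later in zip(runs, runs[1:]):
        if not earlier:
            continue  # nothing to retain from an empty answer
        rates.append(len(earlier & later) / len(earlier))
    return rates


def survival_across_all(runs: list[set[str]]) -> float:
    """Return the share of brands from the first run that appear in every run."""
    if not runs or not runs[0]:
        return 0.0
    survivors = set.intersection(*runs)
    return len(survivors) / len(runs[0])


# Hypothetical brand sets extracted from three runs of the same prompt.
runs = [
    {"Acme", "Globex", "Initech", "Umbrella", "Hooli"},
    {"Acme", "Globex", "Stark"},
    {"Acme", "Wayne"},
]
print(retention_between_runs(runs))
print(survival_across_all(runs))
```

Tracking both numbers separates short-term churn (pairwise retention) from the smaller group of brands that the engine mentions consistently.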

How ChatGPT and Perplexity Process Brand Information

To effectively track brand narratives, organizations must understand the underlying technical architecture of the major generative platforms. Understanding How AI Engines Recommend Brands is the critical first step before launching any monitoring initiative.

| Feature Profile | ChatGPT | Perplexity |
|---|---|---|
| Market Share (2026) | 59.47% | 2.55% |
| Core Architecture | Parametric Memory + Search | Retrieval-Augmented Generation (RAG) |
| Citation Style | Occasional, often unlinked | Persistent, explicit external links |
| Correction Method | High-volume entity association | Targeted authority link building |

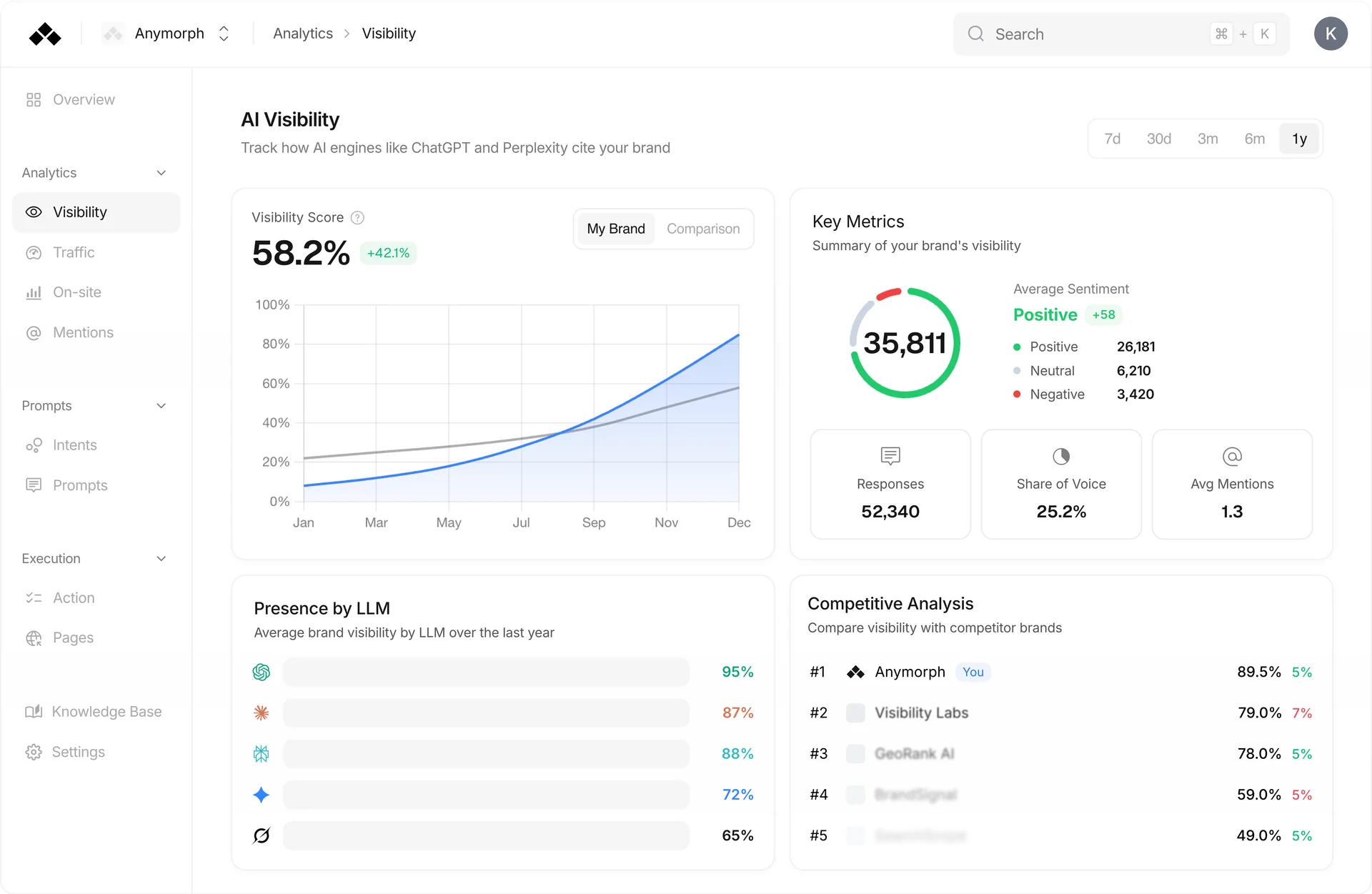

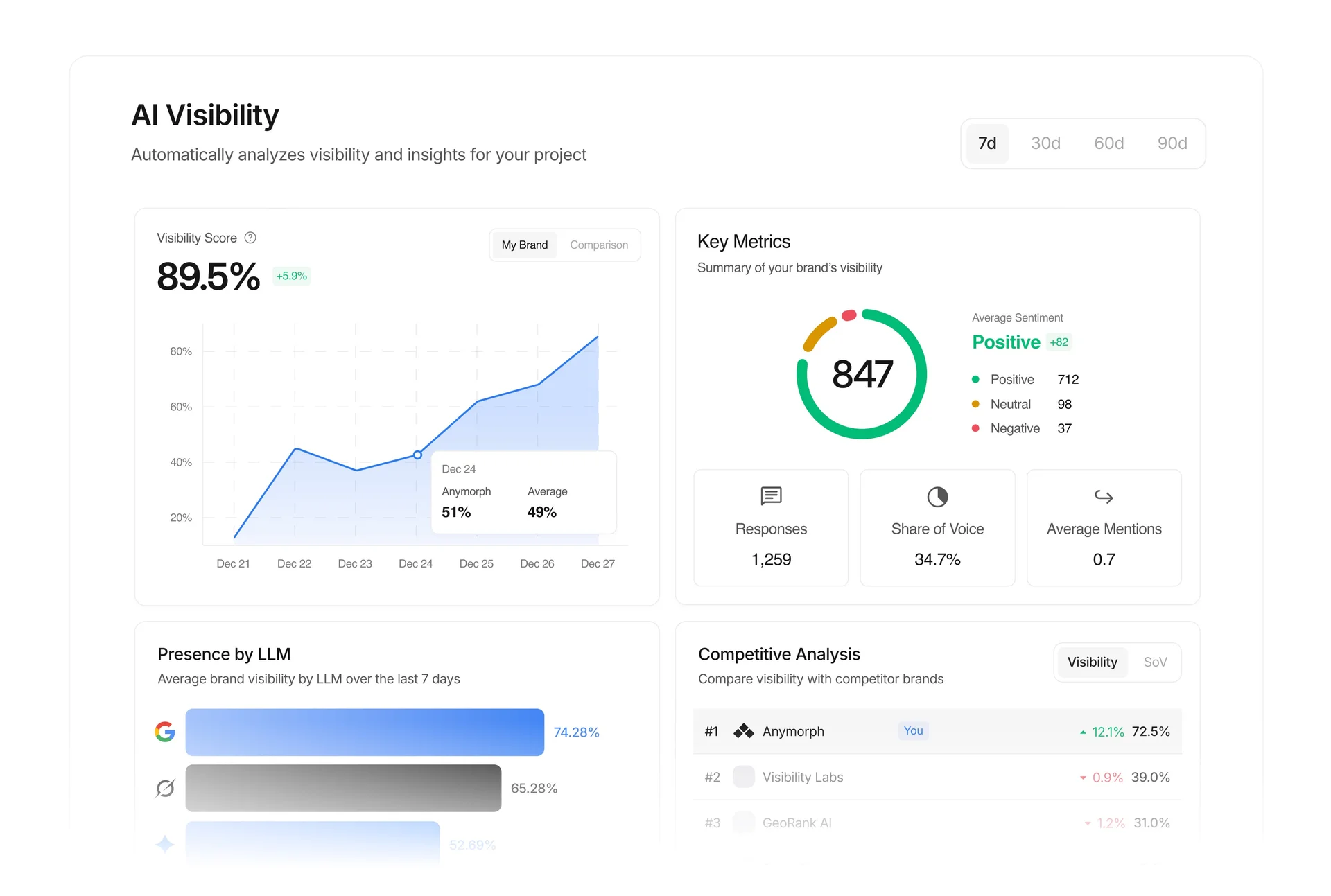

Core Metrics for AI Visibility Success

Measuring AI visibility success requires tracking your visibility rate, rank position, sentiment score, citation sources, and cross-platform presence.

Visibility Rate

The share of AI responses to your targeted prompts in which your brand appears. This is the baseline metric for determining whether the model knows your brand exists.
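As a minimal sketch, visibility rate can be computed as the fraction of collected responses that mention the brand. The brand name "Acme" and the sample responses are hypothetical, and whole-word, case-insensitive matching is an assumption; real brand names may need alias handling.

```python
import re


def mentions_brand(response: str, brand: str) -> bool:
    """True if the brand appears as a whole word, ignoring case."""
    pattern = r"\b" + re.escape(brand) + r"\b"
    return re.search(pattern, response, flags=re.IGNORECASE) is not None


def visibility_rate(responses: list[str], brand: str) -> float:
    """Fraction of responses that mention the brand at least once."""
    if not responses:
        return 0.0
    hits = sum(mentions_brand(text, brand) for text in responses)
    return hits / len(responses)


# Hypothetical answers collected for four targeted prompts.
responses = [
    "Top picks: Acme, Globex.",
    "Consider Globex or Initech.",
    "acme is popular with small teams.",
    "Acmeville is a town, not a vendor.",
]
print(visibility_rate(responses, "Acme"))
```

The word-boundary match keeps unrelated strings such as "Acmeville" from inflating the rate.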

Rank Position

When the AI provides a listicle or comparison table, rank position measures your brand's placement relative to competitors.
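One way to extract rank position is to parse numbered list items from the response and return the number of the first item that names the brand. This sketch assumes the answer uses "1." or "1)" style numbering; comparison tables and unnumbered bullets would need their own parsing. The function name and sample text are hypothetical.

```python
import re
from typing import Optional

# Matches lines such as "1. Acme" or "2) Globex".
LIST_ITEM = re.compile(r"^\s*(\d+)[.)]\s+(.*)$")


def rank_position(response: str, brand: str) -> Optional[int]:
    """Return the list number of the first numbered item naming the brand,
    or None if the brand is not in any numbered item."""
    brand_pattern = re.compile(r"\b" + re.escape(brand) + r"\b", re.IGNORECASE)
    for line in response.splitlines():
        match = LIST_ITEM.match(line)
        if match and brand_pattern.search(match.group(2)):
            return int(match.group(1))
    return None


answer = (
    "Here are the top tools:\n"
    "1. Globex - strong reporting\n"
    "2. Acme - solid integrations\n"
    "3. Initech - budget option"
)
print(rank_position(answer, "Acme"))
```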

Sentiment Score

Evaluates whether the AI uses positive, neutral, or critical framing when describing your specific product features.

Citation Sources

Identifies the exact external websites the AI references to validate its claims about your brand. Knowing which domains influence the model allows you to target those specific sites for PR campaigns.
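A rough sketch of citation tracking: pull every URL out of the collected responses and count the domains. The regular expression is a simplification that assumes citations appear as plain `http(s)` links in the answer text, and the sample responses are hypothetical.

```python
import re
from collections import Counter
from urllib.parse import urlparse

# Simplified URL matcher: stops at whitespace, quotes, and closing brackets.
URL_PATTERN = re.compile(r"https?://[^\s)\]>\"']+")


def cited_domains(responses: list[str]) -> Counter:
    """Count how often each domain is linked across all responses."""
    counts: Counter = Counter()
    for text in responses:
        for url in URL_PATTERN.findall(text):
            host = urlparse(url.rstrip(".,;")).netloc.lower()
            if host.startswith("www."):
                host = host[4:]  # treat www.example.com and example.com as one
            if host:
                counts[host] += 1
    return counts


responses = [
    "Acme is rated highly (https://www.example.com/review).",
    "See https://example.com/pricing and https://news.example.org/acme.",
]
print(cited_domains(responses).most_common())
```

Sorting by frequency gives a short list of the domains most worth targeting.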

Share of Voice (SOV)

Measures your total brand presence against your entire competitive set across multiple generative platforms, including ChatGPT, Gemini, and Perplexity.
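Share of voice has no single standard formula. The sketch below assumes one simple definition: each brand's mentions divided by all brand mentions in the competitive set, pooled across platforms. The platform keys, brand names, and responses are hypothetical.

```python
import re


def share_of_voice(
    responses_by_platform: dict[str, list[str]], brands: list[str]
) -> dict[str, float]:
    """Each brand's share of all brand mentions, pooled across platforms.
    A response counts once per brand it mentions."""
    counts = {brand: 0 for brand in brands}
    for responses in responses_by_platform.values():
        for text in responses:
            for brand in brands:
                pattern = r"\b" + re.escape(brand) + r"\b"
                if re.search(pattern, text, flags=re.IGNORECASE):
                    counts[brand] += 1
    total = sum(counts.values())
    if total == 0:
        return {brand: 0.0 for brand in brands}
    return {brand: count / total for brand, count in counts.items()}


collected = {
    "chatgpt": ["Acme and Globex lead the category.", "Globex is the best fit."],
    "perplexity": ["Acme is cited often.", "Try Initech for small teams."],
}
print(share_of_voice(collected, ["Acme", "Globex", "Initech"]))
```

Computing the same figure per platform, rather than pooled, would show where a competitor dominates a single engine.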

To build a reliable reporting dashboard, teams must integrate these exact metrics. Explore the technical requirements in our breakdown of AI Visibility Analytics: Features to Look For and learn How to Measure Share of Voice in AI Search.

Detecting and Preventing AI Hallucinations

An AI hallucination occurs when a generative model fabricates information with absolute confidence. When these hallucinations involve corporate entities, the results can be catastrophic for pipeline and reputation. Common brand hallucinations include AI models falsely claiming a business has permanently closed or incorrectly stating that a core product feature has been deprecated.

To proactively prevent hallucinations, brands are increasingly utilizing advanced JSON-LD schema architecture and maintaining pristine Wikidata entries. This process, known as strengthening "retrievability," explicitly feeds structured, machine-readable facts directly to the crawlers that index data for these models.
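As an illustration of the structured data described above, this sketch builds a schema.org `Organization` object whose `sameAs` property points at the company's Wikidata entry, then wraps it in the script tag used to embed JSON-LD in a page. The company name, URL, description, and the Wikidata ID `Q0000000` are placeholders; substitute your own values.

```python
import json


def organization_jsonld(
    name: str, url: str, wikidata_id: str, description: str
) -> dict:
    """Build a schema.org Organization object linked to a Wikidata entity."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        # sameAs ties the site's entity to its Wikidata record.
        "sameAs": [f"https://www.wikidata.org/wiki/{wikidata_id}"],
    }


# Placeholder values for a hypothetical company.
markup = organization_jsonld(
    name="Example Co",
    url="https://www.example.com",
    wikidata_id="Q0000000",
    description="Example Co sells project management software.",
)
script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(markup, indent=2)
    + "\n</script>"
)
print(script_tag)
```

Keeping the facts in one generated object makes it easier to update the site markup and the Wikidata entry together when something changes.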

Specialized Monitoring Tools (2026)

- SE Visible: Best for tracking long-term strategic sentiment trends across extended periods.

- Xofu: Specializes in bottom-of-funnel citation tracking to see exactly which external links are driving AI recommendations.

- Scrunch AI: Focuses explicitly on narrative management and factual accuracy, providing alert systems for brand misinformation.

Building a Repeatable AI Monitoring Workflow

Securing a stable presence in generative engines requires treating GEO as a continuous operational process rather than a one-time audit.

Prompt Library Construction

Construct a database of 20 to 50 high-intent prompts that accurately mirror how real customers ask about the industry. These should range from broad category queries to specific bottom-of-funnel comparisons.
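A prompt library can start as a small typed list with a validation step. In this sketch the `Prompt` fields, the funnel-stage labels, and the `validate_library` helper are all hypothetical; only the 20 to 50 size range comes from the guidance above.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Prompt:
    text: str
    funnel_stage: str  # hypothetical labels: "category", "comparison", "bottom_of_funnel"


def validate_library(
    prompts: list[Prompt], min_size: int = 20, max_size: int = 50
) -> list[str]:
    """Return a list of problems found; an empty list means the library passes."""
    problems = []
    if not min_size <= len(prompts) <= max_size:
        problems.append(
            f"library has {len(prompts)} prompts, expected {min_size} to {max_size}"
        )
    seen = Counter(p.text.strip().lower() for p in prompts)
    duplicates = sorted(text for text, n in seen.items() if n > 1)
    if duplicates:
        problems.append(f"duplicate prompts: {duplicates}")
    return problems


library = [
    Prompt("What is the best project management software?", "category"),
    Prompt("Acme vs Globex for small teams", "comparison"),
    Prompt("Is Acme worth the price?", "bottom_of_funnel"),
]
# Size limits are relaxed here only because the sample library is tiny.
print(validate_library(library, min_size=2, max_size=5))
```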

Weekly Audits

Execute weekly automated runs of your entire prompt library. This frequency is necessary to monitor the "return speed" of brand mentions, calculating exactly how long it takes for a brand to recover visibility after a model update.
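Return speed can be estimated from the weekly audit log. The sketch below assumes each audit is recorded as a date plus a flag for whether the brand was visible, and that the model update date is known; both the record shape and the function name are hypothetical. With weekly audits, the result is only accurate to within about a week.

```python
from datetime import date
from typing import Optional


def return_speed_days(
    audits: list[tuple[date, bool]], update_day: date
) -> Optional[int]:
    """Days from the model update to the first audit where the brand is visible
    again after having dropped out. Returns None if the brand never dropped
    after the update or has not yet recovered."""
    dropped = False
    for day, visible in sorted(audits):
        if day < update_day:
            continue  # ignore audits taken before the update
        if not visible:
            dropped = True
        elif dropped:
            return (day - update_day).days
    return None


# Hypothetical weekly audit log around a model update on 5 March 2026.
audits = [
    (date(2026, 3, 2), True),
    (date(2026, 3, 9), False),
    (date(2026, 3, 16), False),
    (date(2026, 3, 23), True),
]
print(return_speed_days(audits, update_day=date(2026, 3, 5)))
```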

Cross-Platform Analysis

Compare responses between ChatGPT's parametric memory outputs and Perplexity's citation-heavy results. Identifying discrepancies highlights specific gaps in your digital footprint.
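Once each prompt has a per-platform visibility flag, finding discrepancies is a small comparison. This sketch assumes the results are stored as a nested dictionary of prompt to platform to boolean; the structure, platform keys, and prompts are hypothetical.

```python
def platform_gaps(visibility: dict[str, dict[str, bool]]) -> dict[str, list[str]]:
    """For prompts where platforms disagree, list the platforms that omit the brand.
    Prompts where every platform agrees are left out."""
    gaps = {}
    for prompt, by_platform in visibility.items():
        if len(set(by_platform.values())) > 1:
            gaps[prompt] = sorted(
                platform for platform, seen in by_platform.items() if not seen
            )
    return gaps


# Hypothetical results: was the brand mentioned for each prompt and platform?
visibility = {
    "best project management software": {"chatgpt": True, "perplexity": False},
    "Acme vs Globex": {"chatgpt": True, "perplexity": True},
    "cheapest task tracker": {"chatgpt": False, "perplexity": False},
}
print(platform_gaps(visibility))
```

A prompt that appears only in the Perplexity column of this report suggests missing or weak citable sources, while one missing only from ChatGPT points toward thin entity association.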

For a deeper dive into the specific technology stacks required to run this methodology, review our breakdown of the Best Generative Engine Optimization (GEO) Tools for SaaS Brands (2026).

Optimize Your Generative Engine Presence

Anymorph delivers the complete analytics infrastructure required to monitor and optimize your generative brand narrative across all major AI engines. Reduce the time required to detect and correct factual inaccuracies from weeks to under 48 hours.

Frequently Asked Questions

What is Generative Engine Optimization (GEO)?

Generative Engine Optimization (GEO) is the practice of managing and improving a brand's visibility, accuracy, and sentiment within AI-driven search engines like ChatGPT, Perplexity, and Google's AI Overviews. Unlike traditional SEO, which optimizes for web page rankings, GEO optimizes for entity inclusion and factual accuracy within direct conversational responses.

How often should I check my brand visibility in ChatGPT?

Because generative models exhibit high volatility and update frequently, you should audit your core priority prompts at least once a week. Monthly checks are insufficient, as visibility can drop by 80% over just five consecutive prompt runs.

Can I directly fix an incorrect claim an AI engine makes about my product?

You cannot directly edit an AI's parametric memory. However, you can correct inaccuracies indirectly by updating your site's JSON-LD schema, correcting your corporate Wikidata entries, and publishing authoritative PR content that clearly states the correct facts. AI models can then ingest the corrected information the next time their data sources are crawled.

Why does Perplexity cite different sources than Google AI Overviews?

Perplexity uses a strict retrieval-first (RAG) architecture that heavily prioritizes live web documents, authoritative news outlets, and explicit links to formulate its answers. Google AI Overviews blends its massive traditional search index with its proprietary Gemini models, leading to different source prioritization based on Google's historical ranking algorithms.