How to Measure Share of Voice in AI Search

As of 2026, measuring AI search share of voice requires tracking both baseline citation frequency and high-intent recommendation rates.

With the performance gap between top-tier language models shrinking to a mere 5.4%, brands must transition from traditional SEO reporting to Generative Engine Optimization (GEO) metrics to capture and measure commercial visibility accurately.

What is AI search share of voice?

AI search share of voice is the percentage of brand visibility a company captures, relative to its competitors, in the answers generated by conversational engines like ChatGPT, Perplexity, and Google AI Overviews.

For the last decade, digital marketers measured market share based on blue links and keyword search volume. However, the rapid adoption of Generative Search has fundamentally altered how users discover products and services. When a consumer or B2B buyer asks a complex question, modern engines do not just provide a list of websites; they synthesize an answer, pulling data from across the web using Retrieval-Augmented Generation (RAG).

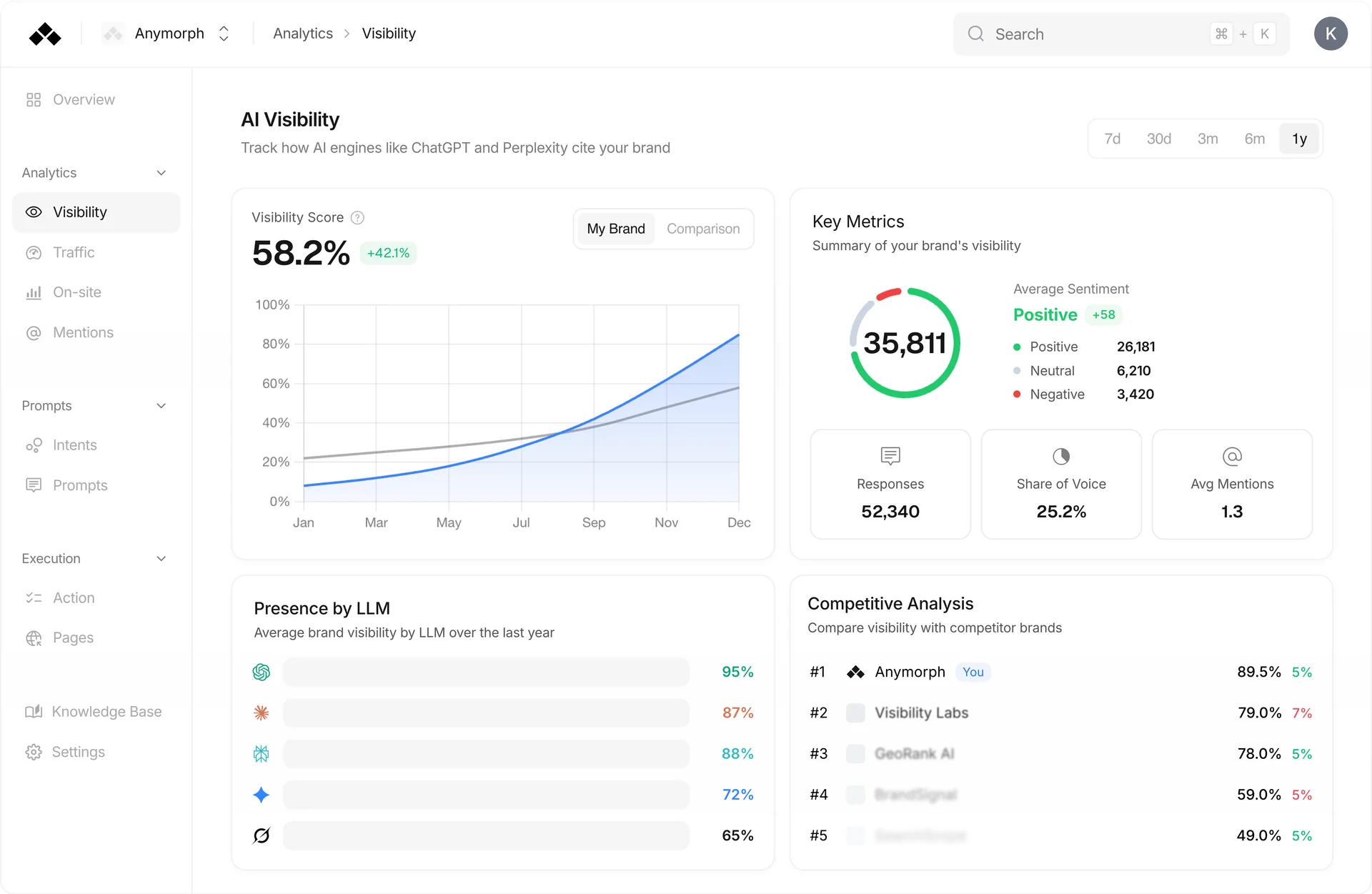

Anymorph analysis shows that AI search share of voice requires a completely different measurement framework than traditional search engines. Instead of tracking a single domain's ranking for a specific keyword, AI visibility tracking evaluates how often a brand's data, products, and positioning are retrieved and synthesized into an AI's conversational output.

Measuring this visibility accurately means looking across the fragmented ecosystem of major Large Language Models (LLMs)—including OpenAI's GPT series, Anthropic's Claude, xAI's Grok, and Google's Gemini. Because these models formulate answers dynamically based on user prompts and real-time web retrieval, tracking your share of voice is the only reliable way to understand if your brand is genuinely part of the modern buyer's research journey.
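
The percentage described in this section can be sketched in a few lines. This is a minimal illustration, not a standard formula: it assumes share of voice is each brand's share of all brand appearances in a sample of collected AI responses, and the brand names and response texts are made up for the example.

```python
from collections import Counter

def share_of_voice(responses, brands):
    """Return each brand's share of all brand appearances, as a percentage.

    A brand is counted once per response in which its name appears
    (case-insensitive substring match, a deliberate simplification).
    """
    counts = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                counts[brand] += 1
    total = sum(counts.values())
    if total == 0:
        return {brand: 0.0 for brand in brands}
    return {brand: 100.0 * counts[brand] / total for brand in brands}

# Hypothetical responses collected from AI engines for one topic.
responses = [
    "For small teams, Acme and Globex are both common choices.",
    "Acme is often cited for its reporting features.",
    "Initech is another option worth a look.",
    "Globex and Acme both offer free tiers.",
]
sov = share_of_voice(responses, ["Acme", "Globex", "Initech"])
print(sov["Acme"])  # 50.0 (3 of the 6 brand appearances)
```

Substring matching will miss misspellings and product-line names, so a production tracker would likely need alias lists or entity matching.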

Mentions vs. Recommendations

A brand mention lists your company in general context, while a recommendation explicitly endorses your product as the best solution for a specific query.

Brand Mentions

A brand mention occurs when an AI engine includes your brand name in a broader list, directory, or descriptive context without explicitly positioning it as the ideal choice.

- General Research / Awareness Intent

- Low to Medium Conversion Value

- Contributes to Citation Frequency

Brand Recommendations

A brand recommendation occurs when the AI acts as an advisor, specifically suggesting your brand as the "best," "most optimal," or "top-rated" option for the user's specific use case.

- High Commercial Intent / Decision

- High Conversion Value

- Drives Recommendation Rate
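
The distinction above can be approximated with a simple rule-based classifier. The sketch below is an assumption-heavy illustration: it treats a response as a recommendation when the brand shares a sentence with one of the endorsement phrases quoted in this article, and the function names and sample responses are hypothetical.

```python
import re

# Endorsement phrases taken from the article's own examples.
RECOMMENDATION_CUES = ("best", "most optimal", "top-rated", "top pick")

def classify(response, brand):
    """Return 'recommendation', 'mention', or 'absent' for one AI response."""
    label = "absent"
    # Naive sentence split on terminal punctuation followed by whitespace.
    for sentence in re.split(r"(?<=[.!?])\s+", response):
        lowered = sentence.lower()
        if brand.lower() not in lowered:
            continue
        if any(cue in lowered for cue in RECOMMENDATION_CUES):
            return "recommendation"
        label = "mention"
    return label

def rates(responses, brand):
    """Compute citation frequency and recommendation rate over a sample."""
    if not responses:
        return {"citation_frequency": 0.0, "recommendation_rate": 0.0}
    labels = [classify(r, brand) for r in responses]
    cited = sum(label != "absent" for label in labels)
    return {
        "citation_frequency": cited / len(labels),
        "recommendation_rate": labels.count("recommendation") / len(labels),
    }

sample = [
    "Popular options include Acme, Globex, and Initech.",
    "For a five-person agency, Acme is the best choice.",
    "Globex is the top-rated tool for enterprises.",
    "Acme was founded in 2015. Many teams consider Globex the best option.",
]
result = rates(sample, "Acme")
print(result)  # {'citation_frequency': 0.75, 'recommendation_rate': 0.25}
```

A cue in the same sentence as the brand does not guarantee the endorsement is aimed at that brand, so results from a rule like this should be spot-checked by hand.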

Why Traditional SEO Metrics Fail for AI Search

Marketing teams attempting to use legacy SEO rank trackers for AI search quickly run into systemic roadblocks. The standard benchmarks that worked for Google's traditional ten blue links are actively failing in the generative era.

Non-Standard Prompting

In traditional SEO, users type fragmented keywords. In generative search, users write complex, highly specific prompts. Slight variations in these conversational queries can entirely change the retrieval parameters, meaning a brand might be mentioned for one prompt variation but ignored in another.
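
One way to account for this sensitivity is to test a family of prompt variations rather than a single query. The following sketch assumes a hypothetical `ask` callable that sends a prompt to an engine and returns its answer as text; `stub_engine` is a stand-in with canned replies, not a real API.

```python
from itertools import product

# Hypothetical phrasings of the same underlying question.
TEMPLATES = [
    "What is the best {category} for {audience}?",
    "Which {category} would you suggest for {audience}?",
    "Compare {category} options for {audience}.",
]

def build_prompts(categories, audiences):
    """Expand every template against every category/audience pair."""
    return [
        template.format(category=c, audience=a)
        for template, c, a in product(TEMPLATES, categories, audiences)
    ]

def coverage(prompts, ask, brand):
    """Return the fraction of prompts whose answer names the brand, plus the misses."""
    misses = [p for p in prompts if brand.lower() not in ask(p).lower()]
    return 1 - len(misses) / len(prompts), misses

def stub_engine(prompt):
    # Stand-in for a real engine call; replace with an actual client.
    if prompt.startswith("Compare"):
        return "Globex and Initech are frequently compared."
    return "Acme is a common pick."

prompts = build_prompts(["invoicing tool"], ["freelancers", "agencies"])
rate, misses = coverage(prompts, stub_engine, "Acme")
```

The list of missed prompts is often more useful than the rate itself, because it shows which phrasings fail to retrieve the brand.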

Score Inflation

Standard accuracy benchmarks are becoming unreliable as many models "game" their training to pass standardized tests. Visibility reporting carries a similar risk: counting raw brand appearances without evaluating their context inflates the score and gives a false sense of brand safety.

Attribution Complexity

Traditional search provides clear click-through rates (CTR). AI engines synthesize answers from multiple sources simultaneously. Enterprise stakeholders require entirely new, robust attribution models to understand exactly which piece of content drove a specific citation.

The Impact of Model Convergence

In the early days of Generative AI, a brand's share of voice varied wildly depending on which engine a consumer used. GPT-4 might give excellent answers, while older models hallucinated brand information entirely. This era is officially over.

The intelligence of these conversational engines is rapidly converging.

Because the engines themselves are uniformly intelligent, your AI share of voice is no longer bottlenecked by the AI's ability to understand your brand. Instead, it is entirely bottlenecked by the structure, availability, and quality of your brand's data across the web. If you are not being cited, it is a content formatting problem, not an AI comprehension problem.

Factors Driving a Higher Share of Voice

Brands actively transitioning from SEO to GEO must focus on a specific set of operational levers that directly influence how Large Language Models interpret, retrieve, and prioritize brand information. Generative Engine Optimization relies on foundational elements like clear structured data:

Clear Structured Data

LLMs rely heavily on easily parsable data when running RAG operations. Ensuring that technical documentation, product specifications, and feature comparisons are meticulously organized with structured data (JSON-LD) and semantic HTML ensures that crawlers can digest the exact parameters of your product without confusion.
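
As an illustration of the structured data described above, the sketch below builds a JSON-LD snippet using the schema.org `Product`, `Brand`, and `PropertyValue` types. The helper name `product_jsonld` and all product details are hypothetical, and the properties a given page should expose will depend on the product.

```python
import json

def product_jsonld(name, description, brand, features):
    """Render a schema.org Product as a JSON-LD script tag for a page's HTML."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "brand": {"@type": "Brand", "name": brand},
        # Each feature becomes a name/value pair a crawler can parse directly.
        "additionalProperty": [
            {"@type": "PropertyValue", "name": key, "value": value}
            for key, value in features.items()
        ],
    }
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"

# Hypothetical product details for the example.
snippet = product_jsonld(
    name="Acme Invoicing",
    description="Invoicing software for freelancers and small agencies.",
    brand="Acme",
    features={"Free tier": "Yes", "Supported currencies": "35"},
)
print(snippet)
```

Generating the markup from the same source as the visible product copy helps keep the two from drifting apart.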

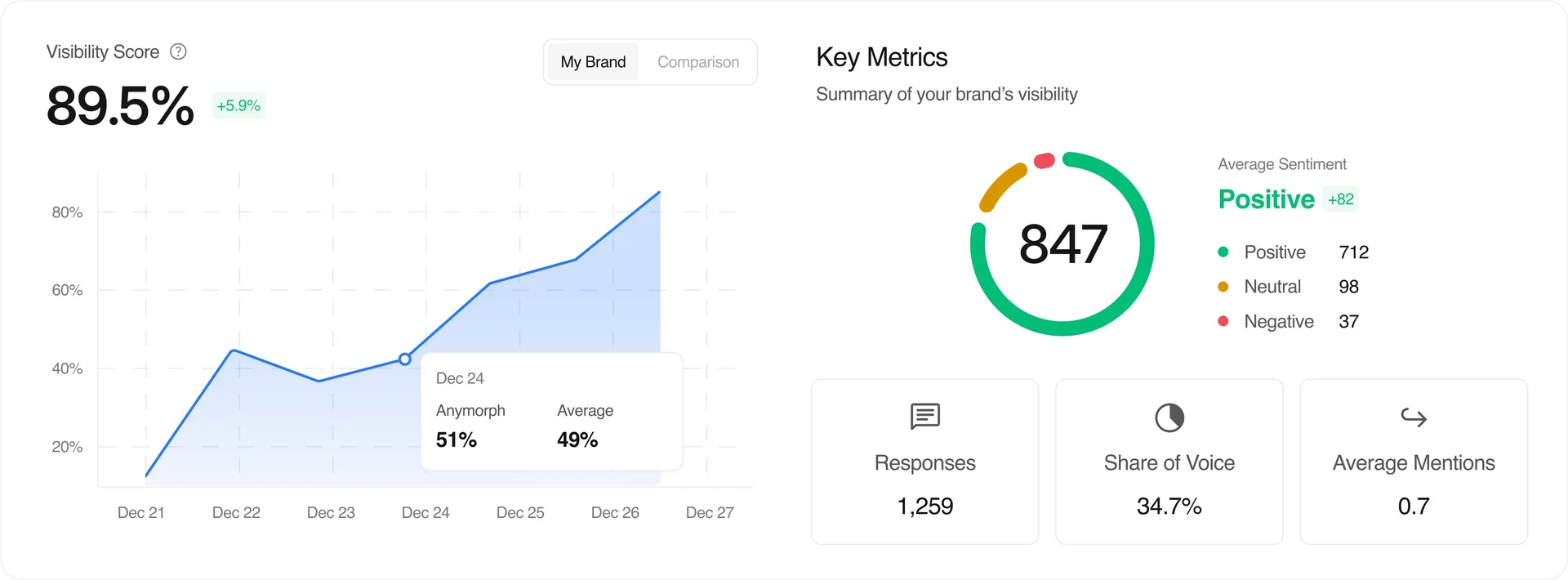

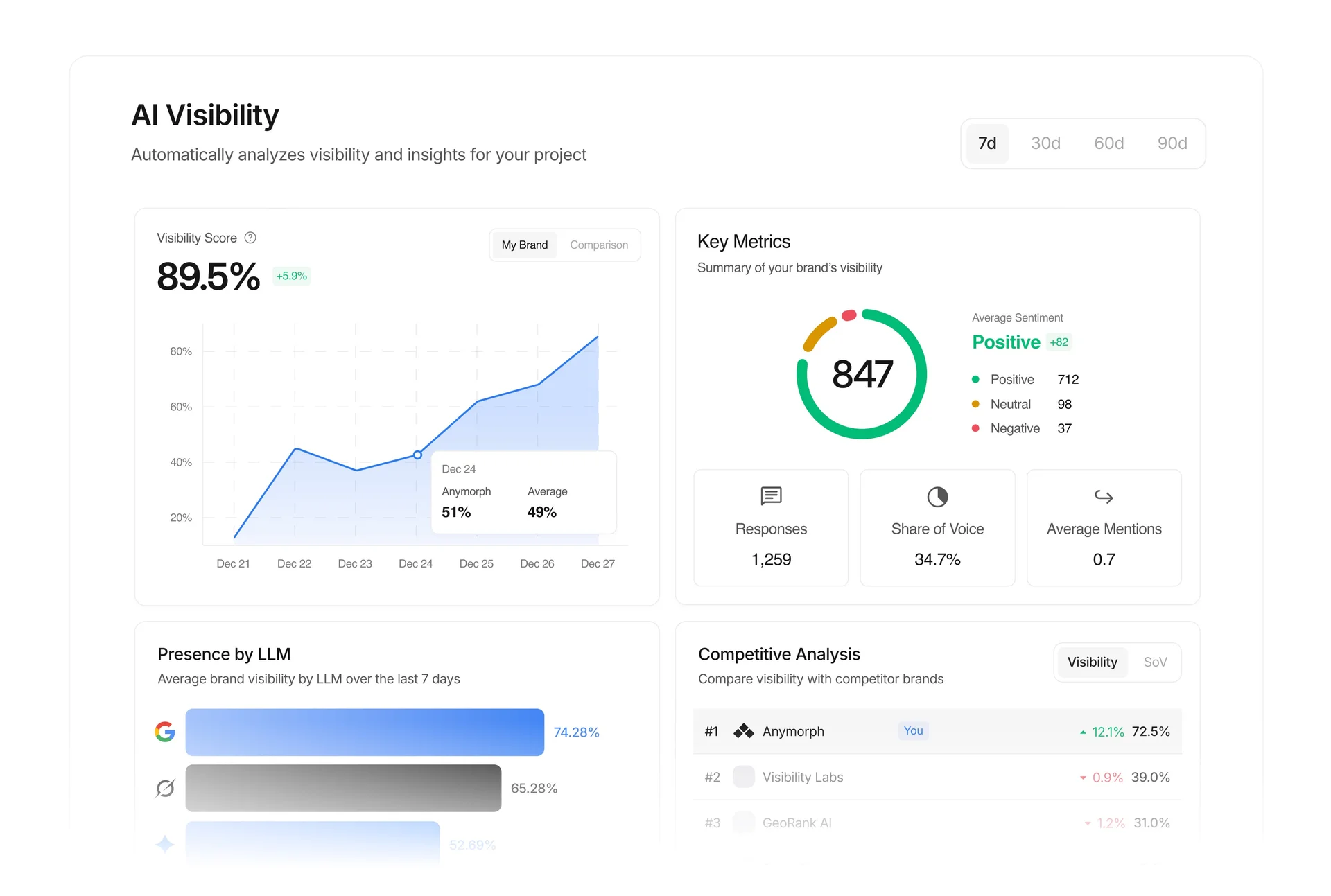

Presenting AI Search Data to Stakeholders

One of the most frequent challenges marketing leaders face is communicating the value of Generative Engine Optimization to executive boards. It is critical to move past raw impression metrics and adopt standardized reporting templates.

Learn more about structuring your reporting in our 90-Day GEO Strategy for SaaS.

Sentiment and Contextual Accuracy

Simply tracking brand name appearances is insufficient. Stakeholders need to know the context. Is the brand being mentioned positively as an industry leader, neutrally as a historical footnote, or negatively? Contextual sentiment reporting provides immediate insight into brand health.
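
A contextual sentiment report can start as a simple tally. The sketch below assumes each observation has already been labeled positive, neutral, or negative by a reviewer or a separate classifier (not shown); the engine names and labels are illustrative data, not measurements.

```python
from collections import Counter, defaultdict

SENTIMENTS = ("positive", "neutral", "negative")

def sentiment_breakdown(observations):
    """Return, per engine, the share of brand appearances with each sentiment."""
    per_engine = defaultdict(Counter)
    for engine, sentiment in observations:
        per_engine[engine][sentiment] += 1
    report = {}
    for engine, counts in per_engine.items():
        total = sum(counts.values())
        report[engine] = {s: counts[s] / total for s in SENTIMENTS}
    return report

# Hypothetical, pre-labeled observations: (engine, sentiment of the appearance).
observations = [
    ("ChatGPT", "positive"),
    ("ChatGPT", "neutral"),
    ("ChatGPT", "positive"),
    ("ChatGPT", "negative"),
    ("Perplexity", "neutral"),
    ("Perplexity", "positive"),
]
report = sentiment_breakdown(observations)
print(report["ChatGPT"])  # {'positive': 0.5, 'neutral': 0.25, 'negative': 0.25}
```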

Citation Quality vs. Quantity

AI citation reporting must weight the authority of the engine and the prominence of the placement. A single, dedicated recommendation inside a deep-dive Perplexity "Pro" search carries significantly higher commercial value than ten brief mentions in a lower-tier chatbot session.
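
The weighting idea can be expressed as a score that multiplies an engine weight by a placement weight. All weights below are hypothetical placeholders chosen only to mirror the example in the paragraph above; real values would need to be calibrated against conversion data.

```python
from dataclasses import dataclass

# Hypothetical weights, not measured values.
ENGINE_WEIGHT = {"perplexity_pro": 1.0, "basic_chatbot": 0.3}
PLACEMENT_WEIGHT = {"sole_recommendation": 1.0, "listed_mention": 0.2}

@dataclass
class Citation:
    engine: str
    placement: str

def weighted_score(citations):
    """Sum engine weight times placement weight over all citations."""
    return sum(
        ENGINE_WEIGHT[c.engine] * PLACEMENT_WEIGHT[c.placement] for c in citations
    )

one_strong = [Citation("perplexity_pro", "sole_recommendation")]
ten_weak = [Citation("basic_chatbot", "listed_mention") for _ in range(10)]

strong_score = weighted_score(one_strong)
weak_score = weighted_score(ten_weak)
print(strong_score > weak_score)  # True
```

Under these assumed weights, the single dedicated recommendation outscores the ten brief mentions, which is the behavior the weighting is meant to capture.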

The Recommendation Gap

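The recommendation gap, understood here as the difference between how often a brand is cited and how often it is actively recommended, is the most critical metric for the C-suite. Closing this gap is the primary KPI of a successful GEO campaign.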
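
Assuming the gap is calculated as citation frequency minus recommendation rate (an interpretation, not a formula given in this article), a per-engine calculation might look like the sketch below. The engine names and counts are invented for illustration.

```python
def recommendation_gaps(stats):
    """Return the gap per engine, in percentage points.

    stats maps engine -> (prompts_run, responses_citing_brand,
    responses_recommending_brand). Engines with no prompts are skipped.
    """
    gaps = {}
    for engine, (prompts_run, cited, recommended) in stats.items():
        if prompts_run == 0:
            continue
        gaps[engine] = round(100 * (cited - recommended) / prompts_run, 1)
    return gaps

# Hypothetical audit counts.
stats = {
    "ChatGPT": (200, 120, 30),
    "Perplexity": (200, 90, 60),
}
gaps = recommendation_gaps(stats)
print(gaps)  # {'ChatGPT': 45.0, 'Perplexity': 15.0}
```

In this made-up sample the brand is cited more often on ChatGPT but converts fewer of those citations into recommendations, which is the pattern a gap report is meant to surface.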

Frequently Asked Questions

How do AI search engines like Perplexity distinguish mentions from recommendations?

Perplexity and similar AI engines utilize Retrieval-Augmented Generation (RAG) to pull real-time data from top-ranking web sources. If the retrieved source material simply lists your brand within a generic directory or article, the AI translates that context into a passive mention. However, if the source material heavily positions your brand as a "top pick," "best software," or pairs your brand with highly positive sentiment and specific use-case solutions, the AI synthesizes that data into an active, high-intent recommendation for the user.

What tools can measure brand citation frequency in AI-generated content?

Currently, specialized Generative Engine Optimization (GEO) tools, autonomous platforms like Anymorph, and manual prompt auditing frameworks are used to track brand citations. These tools work by deploying standardized user personas and running concurrent prompt variations across multiple LLMs to map exactly how often and in what context a brand appears in the generated outputs.
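
The persona-and-prompt approach described above can be sketched as a small audit loop. This is not how any particular tool is implemented: the engines are stub functions standing in for real API clients, the personas and prompts are hypothetical, and the loop runs sequentially rather than concurrently to keep the example short.

```python
from itertools import product

def run_audit(engines, personas, prompts, brand):
    """Ask every engine every persona/prompt combination and record citations."""
    rows = []
    for (engine_name, ask), persona, prompt in product(
        engines.items(), personas, prompts
    ):
        answer = ask(f"I am {persona}. {prompt}")
        rows.append(
            {
                "engine": engine_name,
                "persona": persona,
                "prompt": prompt,
                "cited": brand.lower() in answer.lower(),
            }
        )
    return rows

def citation_frequency_by_engine(rows):
    """Return the fraction of audited prompts per engine that cite the brand."""
    totals, hits = {}, {}
    for row in rows:
        totals[row["engine"]] = totals.get(row["engine"], 0) + 1
        hits[row["engine"]] = hits.get(row["engine"], 0) + row["cited"]
    return {engine: hits[engine] / totals[engine] for engine in totals}

# Stub engines with canned replies; swap in real clients for an actual audit.
engines = {
    "engine_a": lambda prompt: "Acme and Globex are popular.",
    "engine_b": lambda prompt: (
        "Acme is popular with startups."
        if "startup" in prompt
        else "Globex is widely used."
    ),
}
personas = ["a startup founder", "an enterprise IT manager"]
prompts = ["Which CRM should I use?", "What CRM has the best reporting?"]

rows = run_audit(engines, personas, prompts, "Acme")
frequency = citation_frequency_by_engine(rows)
print(frequency)  # {'engine_a': 1.0, 'engine_b': 0.5}
```

Keeping the raw rows, rather than only the aggregate, makes it possible to see which persona or prompt caused a brand to drop out of an engine's answers.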

What is a good AI search share of voice benchmark for my industry?

Given that the intelligence gap between the top 10 models has shrunk to only 5.4%, a strong, industry-leading share of voice should be highly consistent across engines rather than concentrated in a single one.

How do citation quality metrics in AI compare to academic citations?

In academic publishing, a citation's value is heavily weighted by the reputation of the citing journal and the context of the reference. AI search operates on a remarkably similar principle. A mention buried in a list of twenty tools is equivalent to a minor academic footnote. Conversely, being the sole brand highlighted in a dedicated Google AI Overview or a Perplexity Pro response is equivalent to a primary citation in a top-tier journal—it carries vastly more authority, trust, and commercial weight.

Track and Optimize Your AI Search Visibility

Scaling AI visibility cannot be managed manually through spreadsheets and ad-hoc prompt testing. Anymorph is an autonomous platform and engine designed for Generative Engine Optimization (GEO).