AI Visibility Score Formula for Executive GEO Dashboards

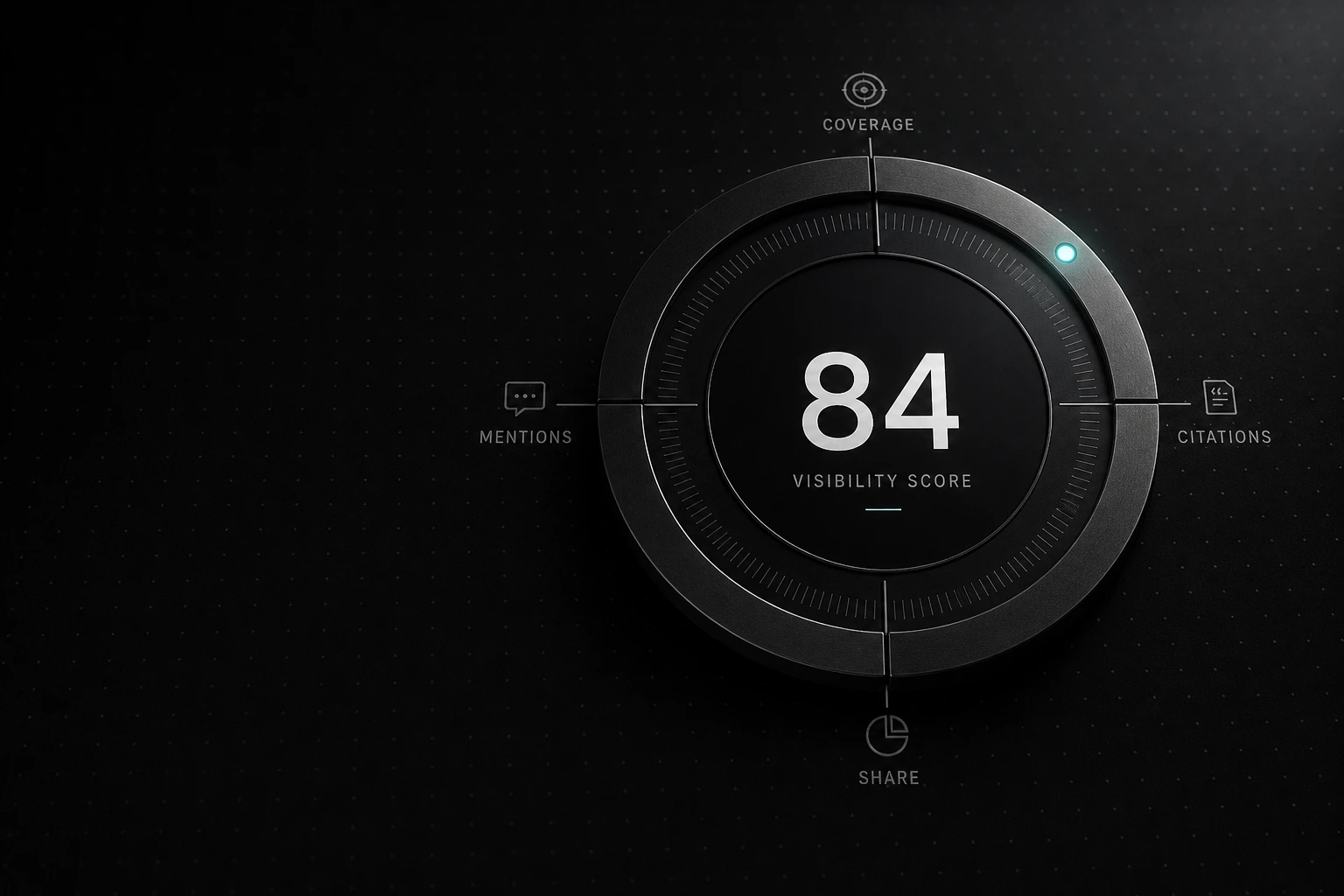

A usable score combines four inputs (coverage, mentions, citations, and competitor share), then reports them weekly by engine and monthly overall.

What should a single AI visibility score measure?

An effective AI visibility score quantifies brand presence by aggregating query coverage, citation counts, mention frequency, and competitor share across language models.

Generative Engine Optimization (GEO) requires exact tracking mechanisms to capture commercial market share. Currently, 92% of marketers plan to optimize for AI search this year, yet only 40.6% have actively implemented GEO strategies (Convertmate, 2026). This execution gap exists because traditional platforms cannot process the unstructured nature of large language model (LLM) responses. Without a standardized score, executive dashboards fail to capture actual brand reach across fragmented AI ecosystems.

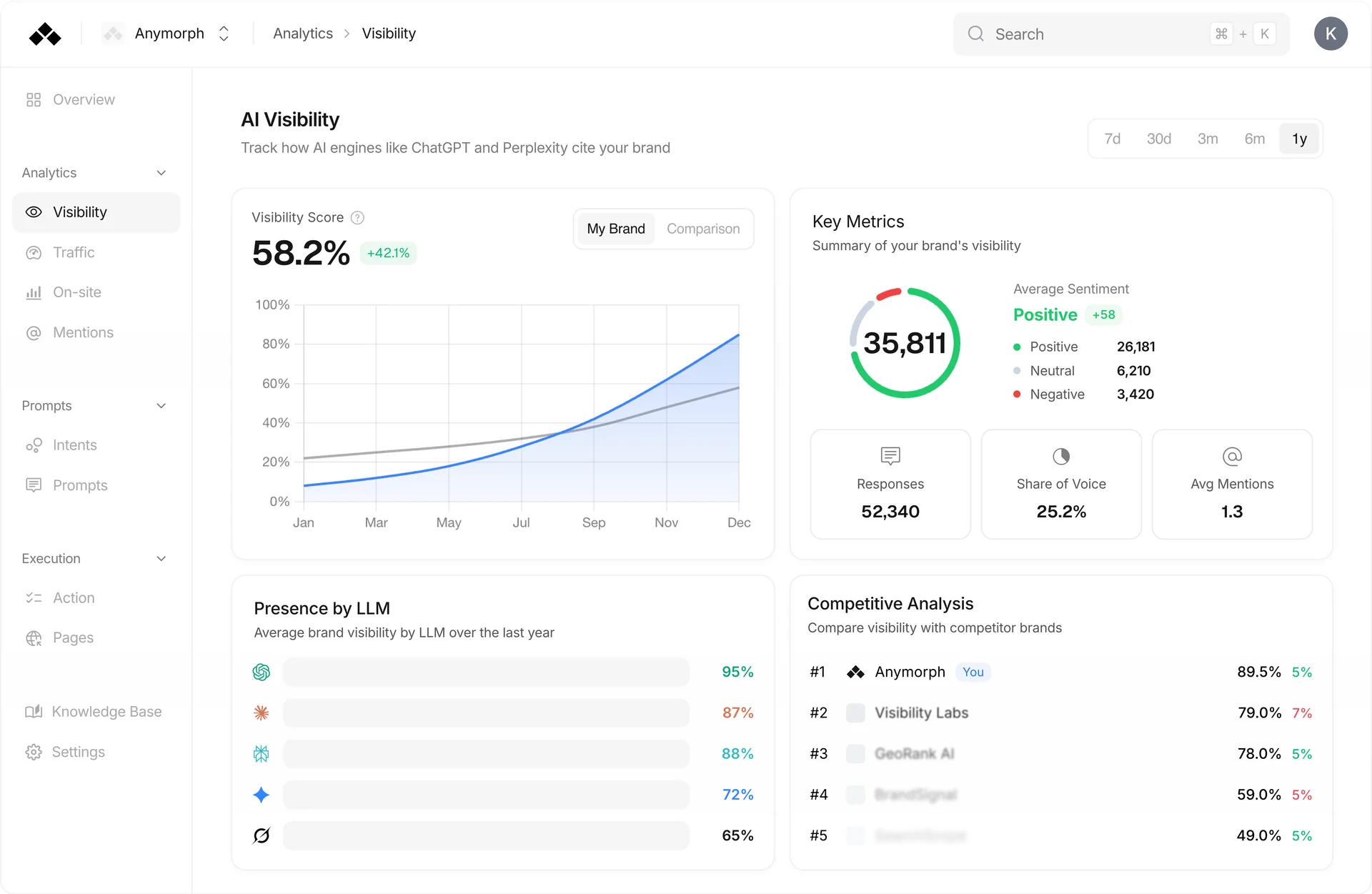

A single composite metric is necessary for leadership reporting, but it becomes a liability if it obscures engine-by-engine volatility. For example, a brand might achieve high visibility on ChatGPT while remaining entirely absent from Google AI Overviews or Claude. Anymorph analytics separate these inputs so marketing operations can isolate performance drops on specific engines. This multi-engine tracking methodology ensures that the single AI visibility score presented to the C-suite accurately reflects total market penetration rather than a skewed, single-platform snapshot.
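A minimal Python sketch of why the composite should be reported next to per-engine values. The function names, the equal default weighting, and the sample scores are all illustrative assumptions, not Anymorph's implementation or measured data.

```python
def composite_score(engine_scores, weights=None):
    """Weighted average of per-engine scores (equal weights by default)."""
    if not engine_scores:
        raise ValueError("at least one engine score is required")
    if weights is None:
        weights = {engine: 1.0 for engine in engine_scores}
    total_weight = sum(weights[engine] for engine in engine_scores)
    weighted_sum = sum(engine_scores[engine] * weights[engine] for engine in engine_scores)
    return weighted_sum / total_weight


def engine_spread(engine_scores):
    """Gap between the strongest and weakest engine; a large gap signals a skewed composite."""
    return max(engine_scores.values()) - min(engine_scores.values())


# Hypothetical scores: strong on one engine, absent from two others.
scores = {"ChatGPT": 0.60, "Google AI Overviews": 0.00, "Claude": 0.00}

overall = composite_score(scores)
spread = engine_spread(scores)
absent = [engine for engine, value in scores.items() if value == 0]

print(f"composite={overall:.2f} spread={spread:.2f} absent={absent}")
```

Showing the spread and the list of absent engines beside the composite keeps a single-engine success from reading as broad market penetration.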

How do actionable GEO metrics differ from vanity metrics?

Actionable GEO measurement centers on brand mentions, which correlate with generative visibility at 0.664, rather than on organic backlinks, which correlate at just 0.218.

Traditional search dashboards rely on positional ranking and domain authority, which hold little value for AI answer engines. Contemporary reporting shifts resource allocation toward direct mention acquisition. Data confirms that brand mentions correlate three times more strongly with AI visibility than traditional backlinks (Omnibound, 2026). To track what genuinely impacts pipeline, organizations must evaluate specific source overlaps, geolocation analytics, and engine-level sentiment instead of outdated link profiles.

| Metric Type | Actionable GEO Metric | Vanity SEO Metric | Reporting Note |
|---|---|---|---|
| Awareness | Prompt Coverage | Keyword Search Volume | Coverage tracks the actual trigger rate in AI engines, ignoring standard search intent. |
| Authority | Citation Density | Domain Authority | Citations prove the LLM trusts your specific data sources over third-party aggregators. |
| Reach | Brand Mentions | Ranking Position | Mentions correlate highly (0.664) with visibility and measure direct brand inclusion. |
| Market Share | Competitor Share of Voice | Share of Search | Share of voice measures your narrative presence against direct commercial rivals. |

Replacing vanity metrics with actionable GEO KPIs requires rebuilding the marketing dashboard from the ground up. This structural change aligns measurement directly with the inputs that language models use to construct their answers.

What is the exact formula for AI visibility?

The standardized formula calculates visibility by multiplying average brand mention frequency by sentiment weight, then dividing the result by total category queries.

Data-dense reporting relies on transparent math that executives can audit. The core KPI framework aggregates volume, qualitative sentiment, and relative market positioning into a single executive indicator. Analysts define the calculation as follows:

"AI Visibility Score = (Average Brand Mention Frequency × Sentiment Weight) ÷ Total Category Queries" — TigerTracks, 2026

Alongside this calculation, the dashboard tracks four distinct inputs to keep the index reliable:

- Coverage: The total volume of category queries and prompts that trigger a brand presence in the response.

- Mentions: The sheer frequency of brand name inclusion within the generated text.

- Citations: The count of attribution links provided to the user, driving referral traffic.

- Competitor Share: The brand's relative visibility compared to direct market rivals within the same prompt sets.
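The quoted formula and the four inputs listed above can be sketched in Python as follows. This is a sketch under assumptions: the `SampledResponse` fields, the function names, the 0-to-1 sentiment scale, and all sample numbers are hypothetical, and the source does not specify how the four inputs map onto the formula's terms, so the two are computed separately here.

```python
from dataclasses import dataclass


@dataclass
class SampledResponse:
    prompt: str
    brand_mentions: int       # times the brand is named in the answer
    brand_citations: int      # attribution links pointing at brand-controlled sources
    competitor_mentions: int  # times tracked rivals are named in the answer


def four_inputs(responses):
    """Summarize coverage, mentions, citations, and competitor share for one prompt set."""
    total = len(responses)
    if total == 0:
        raise ValueError("prompt set is empty")
    covered = sum(1 for r in responses if r.brand_mentions > 0)
    mentions = sum(r.brand_mentions for r in responses)
    citations = sum(r.brand_citations for r in responses)
    rival_mentions = sum(r.competitor_mentions for r in responses)
    all_mentions = mentions + rival_mentions
    return {
        "coverage": covered / total,  # share of prompts that trigger the brand
        "mentions": mentions,
        "citations": citations,
        # Brand's share of all tracked mentions (assumed definition of competitor share).
        "competitor_share": mentions / all_mentions if all_mentions else 0.0,
    }


def ai_visibility_score(avg_mention_frequency, sentiment_weight, total_category_queries):
    """(Average Brand Mention Frequency x Sentiment Weight) / Total Category Queries."""
    if total_category_queries <= 0:
        raise ValueError("total_category_queries must be positive")
    return (avg_mention_frequency * sentiment_weight) / total_category_queries


# Hypothetical sample, not measured data.
sample = [
    SampledResponse("best crm", 2, 1, 2),
    SampledResponse("crm pricing", 0, 0, 3),
    SampledResponse("crm for startups", 1, 1, 0),
    SampledResponse("crm reviews", 0, 0, 1),
]
inputs = four_inputs(sample)
score = ai_visibility_score(avg_mention_frequency=120, sentiment_weight=0.8,
                            total_category_queries=400)
print(inputs, round(score, 4))
```

Keeping the inputs and the formula as separate functions lets an analyst audit each number on its own before it reaches the executive view.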

Securing high scores across these inputs delivers significant pipeline results. Generative engine traffic yields a 15.9% conversion rate, heavily outperforming the 1.76% average associated with standard organic search (Seer Interactive, 2026). This conversion superiority justifies the engineering resources required to implement a continuous 4-input tracking model.

Which measurement pillars dictate AI market share?

Contextual citation density, narrative sentiment, attribution link-through rates, and response dominance for long-tail intents form the four core pillars of generative market share.

Anymorph incorporates these four specific pillars to ensure a holistic view of engine performance across various commercial intent types. Focusing on response dominance helps secure the "Share of Model" before competitors saturate the category with their own proprietary data sets.

Incorporating statistical evidence directly impacts these pillars. Adding verifiable statistics to content triggers a 41% increase in AI visibility, making numerical density the single most effective optimization tactic available (Princeton University, 2024). Furthermore, practitioners control the vast majority of the inputs that determine these pillars. Approximately 86% of cited sources fall under a marketer’s direct influence, split between brand websites (44%) and local listings (42%), with review platforms accounting for a further 8% (ALM Corp, 2025/2026).

What workflow supports brand presence across AI engines?

Managing an AI visibility score requires continuous prompt-set governance, citation exports, and dashboard quality assurance across seven distinct language model platforms.

Compiling a reliable KPI demands rigorous operations. Marketing teams must manage high-volume prompt tracking—often executing hundreds of queries per day—to capture accurate samples of engine outputs. The workflow begins with defining a static prompt set mapped to commercial intents, followed by automated API monitoring to extract responses from engines like ChatGPT, Gemini, and Perplexity.

Once extracted, the system parses the text for mentions, measures sentiment weight, and calculates source overlap against competitors. Because AI platforms update their models and source preferences frequently, single-platform snapshots provide incomplete data. The Anymorph autonomous OS automates this heavy lifting, standardizing the generated outputs into a cohesive executive view.
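The parsing step described above might look like the following Python sketch. It assumes responses and citation URLs have already been collected. The helper names are hypothetical, Jaccard similarity is one assumed way to express source overlap, and sentiment weighting is left out because the source does not define how it is measured.

```python
import re
from urllib.parse import urlparse


def count_mentions(text, brand):
    """Count whole-word, case-insensitive occurrences of the brand name."""
    pattern = r"\b" + re.escape(brand) + r"\b"
    return len(re.findall(pattern, text, flags=re.IGNORECASE))


def cited_domains(urls):
    """Reduce citation URLs to a set of lowercase hostnames without a leading 'www.'."""
    domains = set()
    for url in urls:
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[4:]
        if host:
            domains.add(host)
    return domains


def source_overlap(ours, theirs):
    """Jaccard overlap: shared cited domains divided by all cited domains."""
    union = ours | theirs
    return len(ours & theirs) / len(union) if union else 0.0


# Hypothetical response text and citation lists.
answer = "Acme is a common pick. Many teams choose ACME; acmeworks is unrelated."
our_sources = cited_domains(["https://www.example.com/a", "https://example.com/b",
                             "https://reviews.example.org/x"])
rival_sources = cited_domains(["https://example.com/c", "https://other.example.net/y"])

print(count_mentions(answer, "Acme"))
print(sorted(our_sources))
print(source_overlap(our_sources, rival_sources))
```

Whole-word matching avoids counting look-alike names such as "acmeworks", which would otherwise inflate mention frequency.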

How frequently should teams report AI search analytics?

Marketing operations must monitor citation shifts on a weekly basis while presenting aggregated 90-day visibility trend lines to executive leadership monthly.

The volatility of LLM algorithmic updates dictates a bifurcated reporting structure. Tactical teams require high-frequency weekly alerts to catch sudden platform deprecations or shifts in source preferences. For instance, ChatGPT's citation share for Reddit plummeted from 60% to 10% in late 2025 over just a few weeks (ALM Corp, 2025/2026). A brand relying heavily on Reddit for its contextual citation density would have lost significant visibility within that same window.

Conversely, a monthly review cycle fits executive needs. Leadership dashboards should pair the overall weighted visibility score with specific performance bars for major engines (Medium / Joachim, 2026). This ensures that the executive team sees the strategic progression of the campaign without overreacting to the micro-fluctuations inherent to weekly AI model testing.
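The dual cadence can be sketched in Python as a weekly drop alert plus a 90-day trailing average. The 10-point alert threshold, the function names, and every sample value are assumptions for illustration. The weekly series only imitates a fall from 60% to 10%, and its intermediate points are invented.

```python
from datetime import date, timedelta


def weekly_drop_alerts(weekly_share, threshold=0.10):
    """Flag weeks where citation share fell by more than `threshold` versus the prior week."""
    alerts = []
    for (_, prev_share), (week, share) in zip(weekly_share, weekly_share[1:]):
        drop = prev_share - share
        if drop > threshold:
            alerts.append((week, round(drop, 4)))
    return alerts


def trailing_average(daily_scores, as_of, window_days=90):
    """Mean of the daily scores in the `window_days` ending on `as_of`, or None if empty."""
    start = as_of - timedelta(days=window_days - 1)
    window = [score for day, score in daily_scores.items() if start <= day <= as_of]
    return sum(window) / len(window) if window else None


# Hypothetical weekly citation share for one source on one engine.
weekly = [("W1", 0.60), ("W2", 0.45), ("W3", 0.25), ("W4", 0.10)]
alerts = weekly_drop_alerts(weekly)

# Hypothetical daily visibility scores: 10 early days at 1.0, then 90 days at 0.2.
first_day = date(2026, 1, 1)
daily = {first_day + timedelta(days=i): (1.0 if i < 10 else 0.2) for i in range(100)}
trend = trailing_average(daily, as_of=first_day + timedelta(days=99))

print(alerts)
print(round(trend, 4))
```

The weekly check surfaces each sharp step down for operators, while the trailing average gives leadership one smoothed number per review.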

Frequently asked questions

What is a good AI citation rate for B2B SaaS marketing?

The figures cited in this article do not establish a benchmark citation rate for B2B software. The 40.6% figure describes the share of marketers who have implemented GEO strategies, not how often a brand is cited. What the data does show is that B2B marketers who actively manage their website assets, local listings, and third-party reviews influence at least 86% of the sources that drive citations. Consistently publishing verifiable statistics is associated with a 41% increase in visibility, making data density the most direct path to improving citation frequency.

How often should marketing heads review AI search visibility reports?

Marketing leadership should review aggregate visibility scores monthly while keeping a 90-day trailing trend line visible. Operational teams require weekly tracking to monitor volatile citation sources, as demonstrated when Reddit citations dropped from 60% to 10% rapidly in 2025. This dual-speed cadence ensures executives see stable trends while operators catch abrupt algorithm adjustments.

Which AI prompt tracking tool handles 500 prompts per day?

Enterprise measurement platforms and specialized tracking APIs process hundreds of queries daily across multiple language models. Anymorph operates as an autonomous OS that standardizes outputs from 7+ engines, parsing high volumes of prompts to calculate accurate competitor share. Organizations testing at this scale capture deeper insights into narrative sentiment and long-tail response dominance.

Why do traditional SEO vanity metrics fail in generative engine optimization?

Legacy metrics like organic backlink counts show a weak 0.218 correlation with actual generative visibility, making them a poor proxy for AI tracking. Direct brand mentions, by contrast, exhibit a 0.664 correlation with inclusion in LLM responses. AI systems prioritize contextual citation density and narrative sentiment over conventional domain authority, which calls for a different measurement framework.

What are the most heavily weighted generative engine optimization KPIs?

The four most critical KPIs are prompt coverage, mention frequency, citation counts, and competitor share of voice. When combined, these factors yield traffic that converts at 15.9%, dramatically outperforming standard search benchmarks. Marketing teams use these specific inputs to calculate the overall visibility score and isolate which specific platforms require tactical adjustments.

See how Anymorph tracks visibility across 7+ engines

Compare plans and secure your brand's share of model today.